How Meta's Adaptive Ranking Model Revolutionizes Ad Serving at Scale

Meta's Adaptive Ranking Model bends the inference scaling curve for LLM-scale ad serving, delivering +3% conversions and +5% CTR on Instagram.

Meta continues to push the boundaries of AI-driven recommendation systems, this time tackling a critical challenge: serving LLM-scale models for real-time ad ranking without compromising speed or cost. The resulting Adaptive Ranking Model bends the inference scaling curve, keeping latency under a second while improving ad performance. Below, we answer key questions about this work.

1. What is the inference trilemma in large-scale ad recommendation systems?

The inference trilemma describes the struggle to balance three competing demands when scaling AI models for real-time services: greater model complexity, strict low-latency requirements, and cost efficiency. For a platform like Meta's ads system, which serves billions of people globally, this tension is acute. Adding parameters and deeper architectures improves the model's understanding of user intent, but it also sharply increases compute and memory needs. Yet the system must still respond in under a second, and serving costs must stay manageable. Traditionally this forced a trade-off: simplify the model and lose accuracy, or accept higher latency and expense. The trilemma has kept LLM-scale intelligence out of production recommendation systems until now.
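To make the trade-off concrete, the sketch below picks a single model variant for all traffic under fixed latency and cost budgets. The `ModelVariant` type, the three variants, and all of their numbers are hypothetical illustrations, not Meta's figures. Because every request pays the same latency and cost, the most capable variant is ruled out as soon as either budget binds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVariant:
    name: str
    quality: float      # hypothetical offline quality score (higher is better)
    latency_ms: float   # hypothetical per-request latency
    cost_units: float   # hypothetical relative compute cost per request


def pick_single_model(variants, latency_budget_ms, cost_budget_units):
    """Return the highest-quality variant that fits BOTH budgets, or None.

    With one model serving every request, each request pays the same latency
    and cost, so quality is capped by whichever budget binds first.
    """
    feasible = [
        v for v in variants
        if v.latency_ms <= latency_budget_ms and v.cost_units <= cost_budget_units
    ]
    return max(feasible, key=lambda v: v.quality, default=None)


# Hypothetical variants for illustration only.
variants = [
    ModelVariant("small", quality=0.70, latency_ms=40, cost_units=1),
    ModelVariant("medium", quality=0.78, latency_ms=180, cost_units=6),
    ModelVariant("llm_scale", quality=0.85, latency_ms=1400, cost_units=60),
]

choice = pick_single_model(variants, latency_budget_ms=1000, cost_budget_units=10)
print(choice.name)  # medium
```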

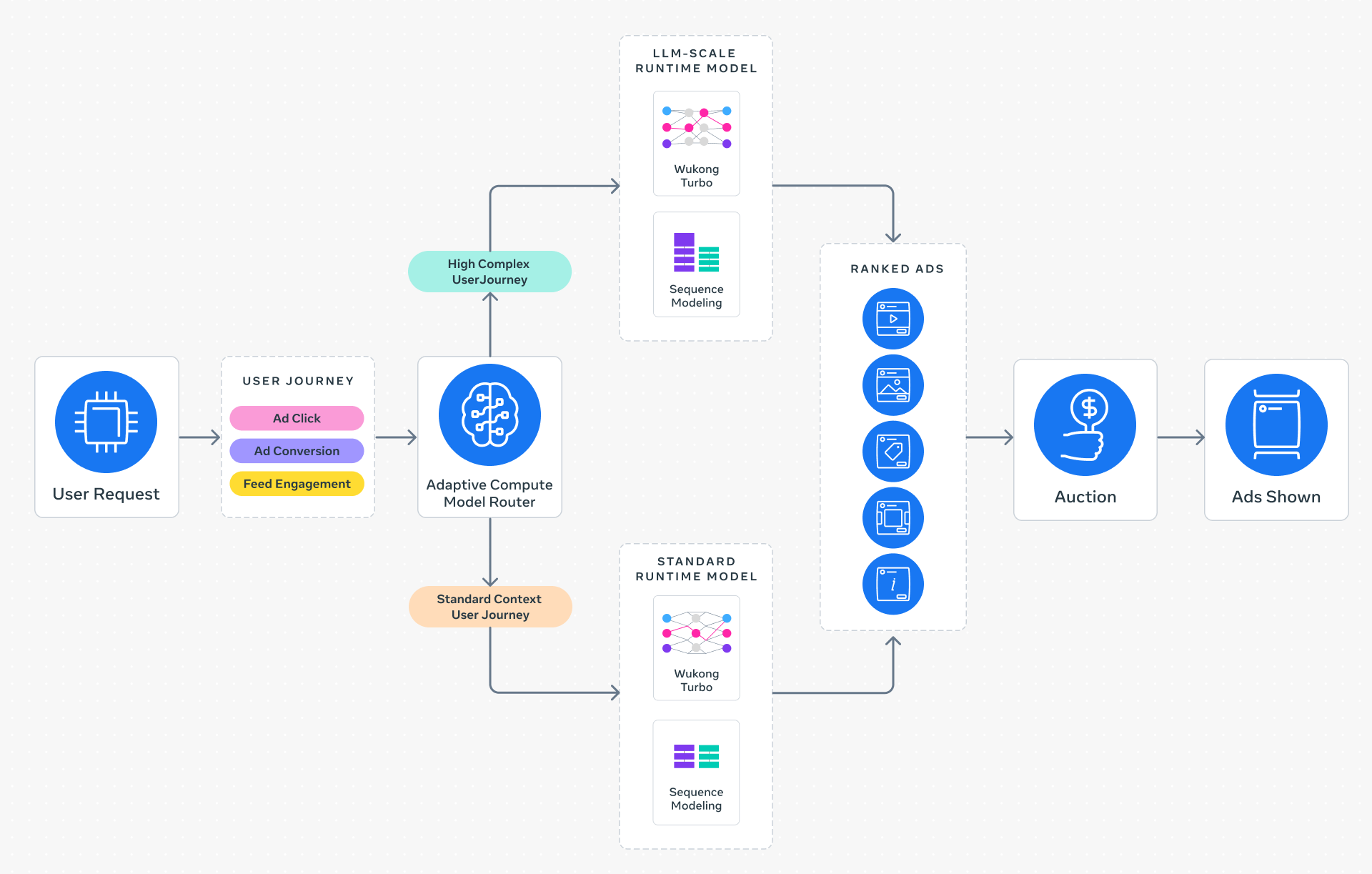

2. How does the Meta Adaptive Ranking Model overcome the inference trilemma?

The Adaptive Ranking Model replaces the "one-size-fits-all" inference approach with intelligent request routing. Instead of running the same massive model for every ad request, the system matches model complexity to a rich understanding of each person's context and intent: simpler requests are served by a lighter, faster model, while more complex ones trigger the full LLM-scale model. Every request is thus served by the most effective and efficient model available. By bending the inference scaling curve in this way, the system holds the strict sub-second latency that Instagram and other Meta platforms depend on while still delivering high-quality, personalized ad experiences, improving both ad performance and computational efficiency.
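A minimal sketch of complexity-based routing, assuming the router sees a couple of precomputed request features. The `AdRequest` fields, the `complexity_score` heuristic, and the 0.6 threshold are all hypothetical; the article does not describe how Meta actually scores requests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdRequest:
    """Hypothetical request features a router might inspect."""
    user_id: int
    recent_engagements: int  # count of recent interactions (assumed available)
    intent_signal: float     # 0.0 (weak) to 1.0 (strong), assumed precomputed upstream


def complexity_score(req: AdRequest) -> float:
    """Toy heuristic: richer history and stronger intent give a higher score in [0, 1]."""
    history = min(req.recent_engagements / 100.0, 1.0)
    return 0.5 * history + 0.5 * req.intent_signal


def route(req: AdRequest, threshold: float = 0.6) -> str:
    """Use the light model unless the request's score clears the threshold."""
    return "full_model" if complexity_score(req) >= threshold else "light_model"


casual = AdRequest(user_id=1, recent_engagements=5, intent_signal=0.2)
high_intent = AdRequest(user_id=2, recent_engagements=250, intent_signal=0.9)
print(route(casual))       # light_model
print(route(high_intent))  # full_model
```

In practice the scoring step would itself need to be far cheaper than the models it chooses between; a small learned classifier could stand in for the hand-written heuristic here.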

3. What are the three key innovations behind Meta's Adaptive Ranking Model?

Meta's solution rests on three pillars: inference-efficient model scaling, model/system co-design, and reimagined serving infrastructure. First, a request-centric architecture lets the system serve LLM-scale models at sub-second latency, enabling a deeper understanding of interests without degrading the user experience. Second, hardware-aware model architectures align model design with the capabilities and limitations of the underlying hardware, improving utilization across heterogeneous environments. Third, multi-card architectures and hardware-specific optimizations enable scaling to parameter counts on the order of one trillion (O(1T)), making it efficient to serve very large recommendation (RecSys) models at runtime. Together, these innovations resolve the tension between complexity and efficiency, making LLM-scale ad serving practical for the first time.
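Some back-of-the-envelope arithmetic shows why O(1T) parameters calls for multi-card serving. The sketch assumes 16-bit weights (2 bytes per parameter), 80 GB accelerator cards, and 80% of each card's memory available for weights; these are illustrative assumptions, not Meta's configuration. One trillion parameters at 2 bytes each is 2 TB of weights, far more than a single card holds.

```python
import math


def cards_needed(num_params: float, bytes_per_param: float, card_mem_gb: float,
                 usable_fraction: float = 0.8) -> int:
    """Minimum number of cards needed to hold the weights alone.

    usable_fraction reserves headroom on each card for activations,
    caches, and runtime buffers (an assumed value, not a measured one).
    """
    weight_bytes = num_params * bytes_per_param
    usable_bytes_per_card = card_mem_gb * 1e9 * usable_fraction
    return math.ceil(weight_bytes / usable_bytes_per_card)


# 1T parameters in 16-bit precision on hypothetical 80 GB cards.
print(cards_needed(1e12, 2, 80))  # 32
```

The result is only a lower bound: it ignores replicas added for throughput and the details of how large embedding tables are sharded across cards.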

4. How does inference-efficient model scaling work in this system?

Inference-efficient model scaling is achieved through a request-centric architecture that departs from traditional batching approaches. Rather than applying one uniform model size to all inputs, the system adapts to each request's complexity and the user's context. A smaller, faster sub-model handles straightforward ad queries, conserving system resources, while complex, high-intent requests engage the full LLM-scale model for a more nuanced understanding. This adaptive routing spends compute cycles where they deliver the most value. The model itself also uses efficient attention mechanisms and sparsity to reduce per-request computation. The result is LLM-scale intelligence available to every user, with each request incurring only the cost it needs, thereby bending the inference scaling curve toward greater efficiency.
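The cost side of adaptive routing reduces to a weighted average: if a fraction p of requests reaches the full model, the expected per-request cost is c_router + p·C_full + (1 − p)·C_light. The sketch below evaluates it; the 60:1 cost ratio, the 20% full-model share, and the routing overhead are hypothetical numbers chosen only to show the shape of the saving.

```python
def expected_cost(p_full: float, cost_full: float, cost_light: float,
                  router_cost: float = 0.0) -> float:
    """Average per-request compute when a fraction p_full of traffic goes to the
    full model and the rest to the light model, plus a fixed routing overhead."""
    if not 0.0 <= p_full <= 1.0:
        raise ValueError("p_full must be between 0 and 1")
    return router_cost + p_full * cost_full + (1.0 - p_full) * cost_light


# Hypothetical relative costs: full model = 60 units, light model = 1 unit.
uniform = expected_cost(p_full=1.0, cost_full=60.0, cost_light=1.0)
adaptive = expected_cost(p_full=0.2, cost_full=60.0, cost_light=1.0, router_cost=0.5)
print(f"{uniform:.1f} {adaptive:.1f}")  # 60.0 13.3
```

Under these assumed numbers, routing cuts average cost by roughly 4.5x relative to running the full model everywhere, while high-intent requests still receive full-model capacity.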

5. How does Model/System Co-Design improve hardware utilization?

Model/System Co-Design involves creating model architectures tailored to the underlying hardware. Meta's team analyzes the strengths and limitations of its servers, GPUs, and network topology, then designs the model's layers, tensor operations, and memory access patterns to maximize throughput and minimize bottlenecks. For example, certain operations are refactored to align with chip-level vector instructions, and memory layouts are optimized to reduce latency. The approach also accounts for heterogeneous hardware environments, where different chips or hardware generations coexist, by dynamically selecting the most efficient hardware path for each request. The result is significantly better utilization of available silicon, with fewer idle cycles and less wasted energy. This helps the system scale to O(1T) parameters while remaining cost-efficient, a critical factor for global deployment.
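One way to picture per-request hardware selection in a heterogeneous fleet: estimate each device path's latency as a fixed overhead plus work divided by throughput, discard paths that miss the latency budget, and take the cheapest of the rest. The `HardwarePath` profiles and the latency-times-price cost metric are hypothetical; the article says only that the most efficient hardware path is chosen for each request.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HardwarePath:
    name: str
    overhead_ms: float     # fixed launch/transfer overhead (hypothetical)
    gflops_per_ms: float   # sustained throughput for this model (hypothetical)
    cost_per_ms: float     # relative price of occupying this device per ms

    def latency_ms(self, work_gflops: float) -> float:
        """Simple latency model: fixed overhead plus work / throughput."""
        return self.overhead_ms + work_gflops / self.gflops_per_ms


def select_path(paths, work_gflops, latency_budget_ms):
    """Cheapest path that still meets the latency budget; None if none fits."""
    feasible = [p for p in paths if p.latency_ms(work_gflops) <= latency_budget_ms]
    return min(feasible,
               key=lambda p: p.latency_ms(work_gflops) * p.cost_per_ms,
               default=None)


# Two made-up hardware generations: older/cheaper vs newer/faster.
paths = [
    HardwarePath("gen_a_gpu", overhead_ms=2.0, gflops_per_ms=50.0, cost_per_ms=1.0),
    HardwarePath("gen_b_gpu", overhead_ms=1.0, gflops_per_ms=200.0, cost_per_ms=3.0),
]
print(select_path(paths, work_gflops=100, latency_budget_ms=50).name)   # gen_a_gpu
print(select_path(paths, work_gflops=1000, latency_budget_ms=10).name)  # gen_b_gpu
```

With these made-up profiles, a light request lands on the older, cheaper generation, while a heavy request under a tight budget is pushed to the faster one.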

6. What are the real-world results of the Adaptive Ranking Model on Instagram?

Since its launch on Instagram in Q4 2025, the Adaptive Ranking Model has delivered measurable improvements for advertisers and users alike. For targeted users, ad conversions increased by 3% and ad click-through rates rose by 5%. These gains came without increasing system-wide computational costs or sacrificing latency: the system still operates within sub-second response times. Thanks to these efficiency improvements, Meta can serve LLM-scale intelligence to billions of users every day, giving advertisers better ROI and users more relevant ad experiences. The success on Instagram paves the way for broader deployment across Meta's family of apps.

7. How does this system benefit advertisers and users?

Advertisers see higher conversion and click-through rates (+3% and +5%, respectively, on Instagram) because the system's deeper understanding of user intent matches ads more precisely to individual interests. This higher relevance improves campaign performance without requiring additional spend. For users, ads become less intrusive and more useful because they align with their current context and needs. The system maintains privacy standards and does not compromise user data. The Adaptive Ranking Model thus benefits both sides: advertisers achieve higher ROI, and users enjoy a more seamless, personalized platform experience. This balance is critical for maintaining engagement and trust in a global service.